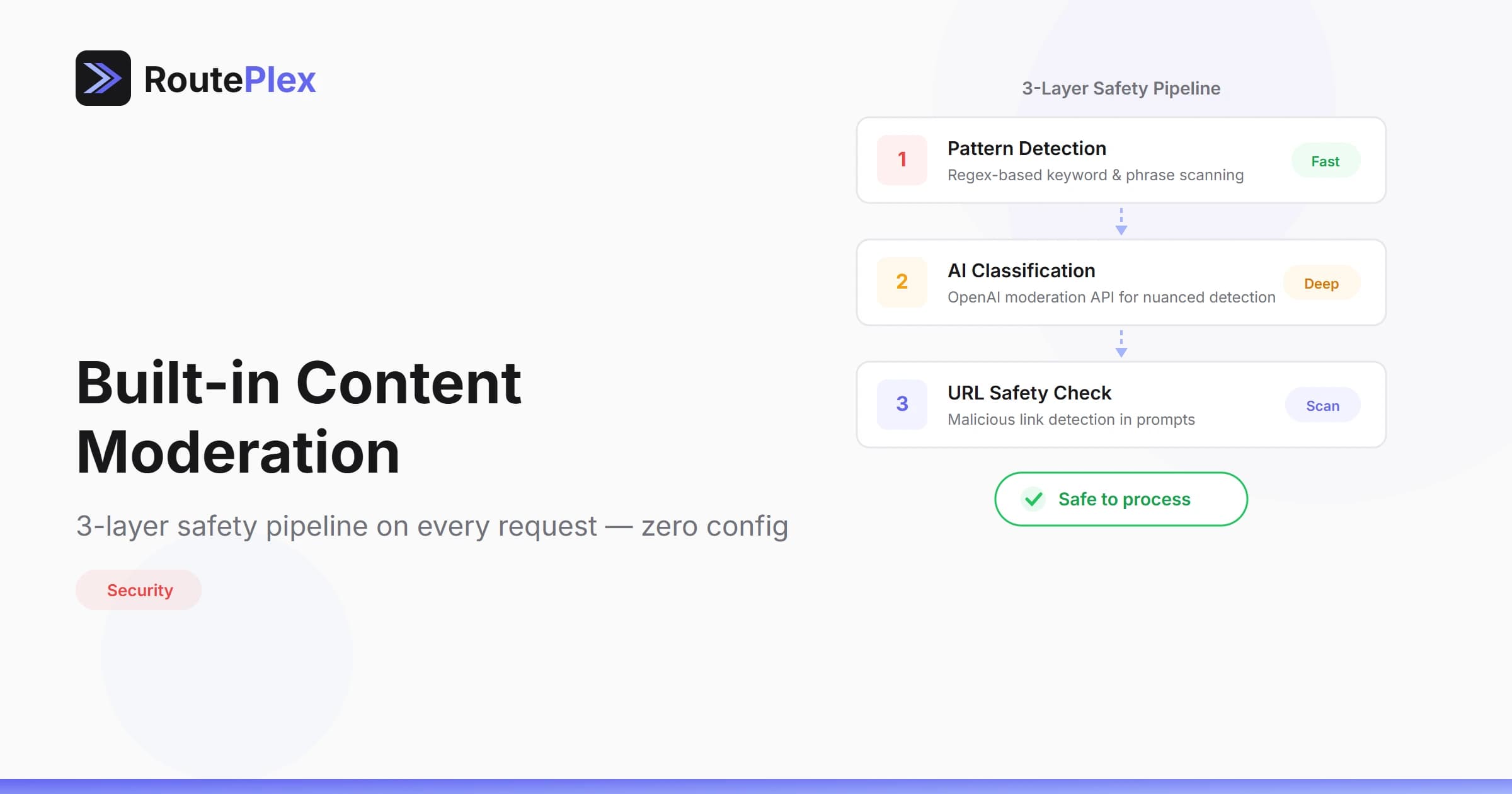

Built-in Content Moderation: 3-Layer Safety Pipeline

Every request through RoutePlex passes through a 3-layer content moderation pipeline before it reaches any AI model. This happens automatically — no configuration, no extra cost, no API flags needed.

Why Moderation Matters

If you're building AI-powered products, you need moderation. Without it, your application is exposed to prompt injection, illegal content generation, and abuse that can create legal liability. Many developers either skip moderation entirely or ship incomplete solutions.

RoutePlex handles this at the gateway level so you don't have to.

The 3-Layer Pipeline

Layer 1: Pattern-Based Input Validation

The first layer is the fastest — a regex-based scanner that checks user messages for content that must be blocked regardless of context:

- CSAM / child exploitation — Immediate block

- Violence instructions — Bomb-making, weapons manufacturing details

- Drug synthesis — Manufacturing instructions

- Terrorism — Planning and recruitment content

This layer is intentionally narrow. It does NOT block profanity, political discussion, adult content (non-illegal), or general mentions of sensitive topics. Only genuinely dangerous, instructional content is caught.

Speed: Sub-millisecond, so the effect on request latency is negligible.
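To make the idea concrete, here is a minimal sketch of a narrow, regex-based scanner. The patterns, category names, and the `scan_input` function are hypothetical and chosen only for illustration; RoutePlex's actual rules are not published, and a production rule set would be far more extensive.

```python
import re
from typing import Optional

# Hypothetical patterns for illustration only (assumption, not the real rule set).
# Each one targets instructional phrasing, not a bare mention of a topic.
BLOCK_PATTERNS = {
    "violence_instructions": re.compile(
        r"\bhow\s+to\s+(?:build|make|assemble)\s+(?:a\s+)?(?:pipe\s+)?bomb\b",
        re.IGNORECASE,
    ),
    "drug_synthesis": re.compile(
        r"\b(?:synthesi[sz]e|cook|manufacture)\s+(?:meth|methamphetamine|fentanyl)\b",
        re.IGNORECASE,
    ),
}


def scan_input(message: str) -> Optional[str]:
    """Return the name of the first matching category, or None if nothing matches."""
    for category, pattern in BLOCK_PATTERNS.items():
        if pattern.search(message):
            return category
    return None
```

Because the patterns are precompiled and anchored on instructional wording, a message that merely mentions a sensitive topic or contains profanity passes through untouched.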

Layer 2: AI-Powered Classification

The second layer uses OpenAI's moderation API to perform nuanced content classification. This catches harmful content that simple patterns miss — things that require understanding context and intent.

Only severe categories trigger a block:

- sexual/minors

- violence/graphic

- self-harm/instructions

- self-harm/intent

General harassment, hate speech, and adult content are logged but not blocked at this layer — we let the AI provider's own safety systems handle these grey areas.

Key design decisions:

- Fail-open: If the moderation API is unreachable, the request proceeds. We never block legitimate traffic due to a service outage.

- 3-second timeout: Moderation checks never slow your request by more than 3 seconds.

- Free: OpenAI's moderation endpoint has no cost — this layer adds zero to your bill.
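The fail-open and timeout decisions above can be sketched as follows. This is an illustration under stated assumptions, not RoutePlex's implementation: `classify` is a hypothetical stand-in for the call to the moderation endpoint and is assumed to return a mapping of category name to a boolean flag, and `should_block` is a name invented here.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

# The four severe categories listed above; every other category is log-only.
BLOCKING_CATEGORIES = frozenset({
    "sexual/minors",
    "violence/graphic",
    "self-harm/instructions",
    "self-harm/intent",
})


def should_block(
    text: str,
    classify: Callable[[str], Dict[str, bool]],  # hypothetical classifier call
    timeout_s: float = 3.0,
) -> bool:
    """Return True only when the classifier flags a severe category in time."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(classify, text)
    try:
        flags = future.result(timeout=timeout_s)
    except Exception:
        # Fail-open: a timeout or any classifier error lets the request proceed.
        return False
    finally:
        # Do not wait for a slow call to finish before answering.
        pool.shutdown(wait=False)
    return any(flags.get(category, False) for category in BLOCKING_CATEGORIES)
```

The trade-off is deliberate: an outage of the classifier degrades this layer to a no-op instead of turning it into a source of failed requests, while Layer 1 still runs locally.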

Layer 3: URL Safety Validation

When prompts contain URLs (detected automatically by our tool system), the third layer scans them against:

- Domain blocklists: Known malware hosts, piracy sites

- Dark web indicators: .onion and .i2p TLDs are blocked

- URL shortener detection: Flagged for logging (not blocked)

This helps stop prompt injection attacks that try to pull malicious content in through our URL fetching system.

No external calls: URL validation uses a static blocklist — no DNS lookups or third-party API calls. Fast and predictable.
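A static check of this kind might look like the sketch below. The blocklist entries, the shortener list, and the `check_url` function are placeholders assumed for illustration; the real lists are not published. Only the standard library's `urllib.parse` is used, so no network access occurs.

```python
from urllib.parse import urlsplit

BLOCKED_TLDS = (".onion", ".i2p")
# Placeholder entries (assumption); the real blocklist contents are not published.
BLOCKED_DOMAINS = frozenset({"malware.example", "piracy.example"})
SHORTENERS = frozenset({"bit.ly", "t.co", "tinyurl.com"})


def check_url(url: str) -> str:
    """Return 'block', 'flag', or 'allow' using only static, in-memory data."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    if host.endswith(BLOCKED_TLDS):
        return "block"
    # Match a blocklisted domain itself and any subdomain of it.
    if any(host == d or host.endswith("." + d) for d in BLOCKED_DOMAINS):
        return "block"
    if host in SHORTENERS:
        return "flag"  # logged, not blocked
    return "allow"
```

Matching on the parsed hostname, not on the raw string, avoids false positives from a blocklisted name that merely appears in a path or query string.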

What We Don't Block

This is equally important. RoutePlex moderation is not designed to be a nanny. We intentionally allow:

- Profanity and swear words

- Political discussion (all viewpoints)

- Adult content (legal, non-exploitative)

- Discussion of sensitive topics (drugs, violence) in non-instructional context

- Controversial opinions

- Creative writing involving dark themes

Our goal is safety, not censorship. Your users should be able to have natural conversations with AI models without hitting false-positive walls.

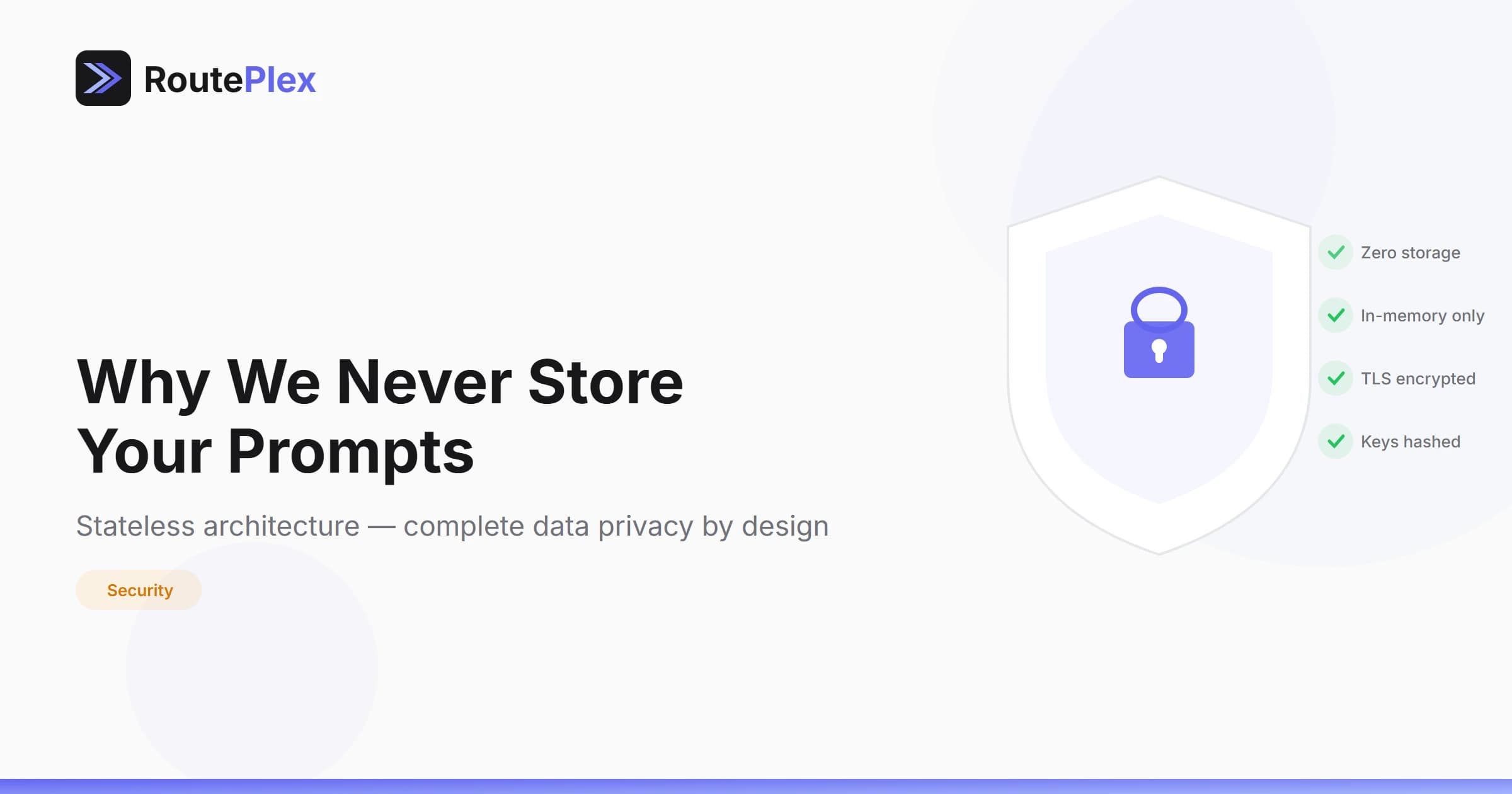

Privacy by Design

When content IS blocked, we never store the raw prompt. The compliance logger records:

- SHA-256 hash of the content (for pattern analysis)

- Which moderation layer triggered the block

- Timestamp and account ID

- Category of the violation

The actual text of the blocked prompt is never written to disk, never logged, and never retained. This is consistent with our stateless architecture — even moderation events don't compromise user privacy.
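As a rough sketch of what such a record could look like, the function below hashes the prompt and keeps only metadata. The field names and the `build_block_record` function are assumptions made for illustration, not RoutePlex's actual log schema.

```python
import hashlib
from datetime import datetime, timezone


def build_block_record(prompt: str, layer: int, category: str, account_id: str) -> dict:
    """Build a log record that holds a SHA-256 digest in place of the raw prompt."""
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    return {
        "content_sha256": digest,   # hypothetical field names throughout
        "layer": layer,             # which moderation layer triggered the block
        "category": category,
        "account_id": account_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
```

Identical prompts produce identical digests, which is what makes pattern analysis possible without retaining the text itself.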

Generic Error Messages

Blocked requests receive a generic error response. We never reveal which specific pattern, word, or category triggered the block. This prevents adversarial users from probing the system to find bypass techniques.

    {
      "error": {
        "message": "Request blocked by content policy",
        "type": "content_policy_violation",
        "code": 400
      }
    }

How It Affects Your Integration

It doesn't. Moderation runs transparently on every request. There's nothing to configure, no extra parameters, and no opt-in required. If your request is safe (and the vast majority are), you'll never notice it's there.

If you're building applications that need to handle sensitive content — medical, legal, security research — RoutePlex's intentionally narrow blocking means your legitimate use cases work without interference.
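The one case a client may still want to recognize is a blocked request, for example to show the user a friendly message. A minimal sketch, assuming the HTTP status matches the 400 code in the generic error body shown earlier; the helper name `is_content_policy_block` is invented here.

```python
import json


def is_content_policy_block(status_code: int, body: str) -> bool:
    """Return True if a response matches the generic content-policy error."""
    if status_code != 400:  # assumption: HTTP status mirrors the "code" field
        return False
    try:
        error = json.loads(body).get("error", {})
    except (json.JSONDecodeError, AttributeError):
        # Not JSON, or JSON that is not an object: treat as some other error.
        return False
    return isinstance(error, dict) and error.get("type") == "content_policy_violation"
```

Since the response never says which rule fired, the client can only distinguish "blocked by policy" from other errors, not the reason for the block.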

Available on All Plans

Content moderation is included on every plan, including the free Evaluation tier. There's no premium moderation tier and no way to disable it. Safety is a baseline, not a feature.